
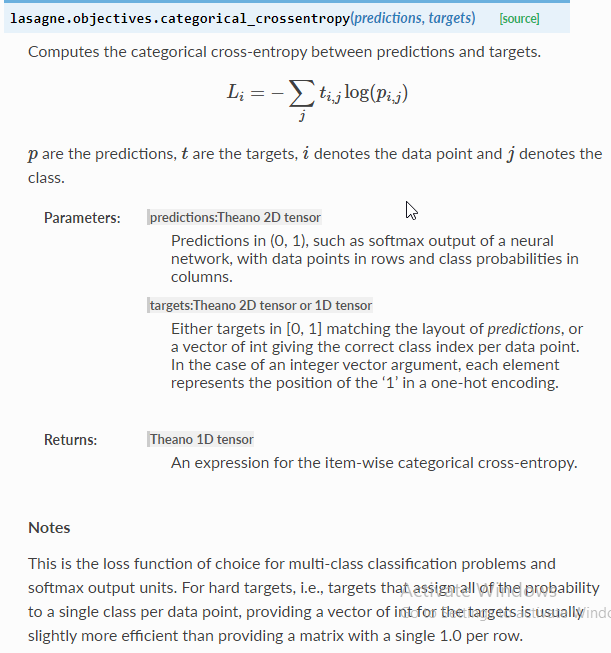

8/28/2023

PyTorch cross entropy loss

Loss functions are fundamental in ML model training: in most machine learning projects, there is no way to drive your model toward correct predictions without one. In layman's terms, a loss function is a mathematical function or expression used to measure how well a model is doing on some dataset. Knowing that gives the developer insight into many training decisions, such as switching to a new, more powerful model or even changing the loss function itself to a different type. Many loss functions have been developed over the years, each suited to a particular training task. In this article, we are going to explore the loss functions that are part of the PyTorch nn module. We will further take a deep dive into how PyTorch exposes these loss functions to users as part of its nn module API by building a custom one.

Using NLLLoss() with log_softmax() output activation (left) and using CrossEntropyLoss() with no output activation (right) give the exact same results. I was mentally comparing the two approaches and decided that NLLLoss() ("negative log likelihood loss") with log_softmax() output activation has one tiny advantage over CrossEntropyLoss() with no activation: the no-output-activation code just doesn't look as nice. The last line of the network's output computation is one of the following (in these snippets, T is torch, imported with import torch as T):

    # z = T.log_softmax(self.oupt(z), dim=1)  # for NLLLoss()
    # z = self.oupt(z)  # no activation for CrossEntropyLoss()

Notice that for NLLLoss() I use log_softmax() output activation and for CrossEntropyLoss() I use no activation (sometimes called identity activation). This is what I don't like about the CrossEntropyLoss() approach: the missing activation sort of looks like a mistake, even though it isn't. PyTorch does have an explicit Identity module you can use:

    z = T.nn.Identity()(self.oupt(z))  # no activation

But there's no built-in identity() function, so the code is very ugly.

The CrossEntropyLoss() with no output activation approach has been available since early versions of PyTorch and is meant to make multi-class classification easier. I think ML developers are smart enough to put their big boy pants on and use an explicit output activation function.

For training, the two choices for the loss function are shown below, followed by the optimizer, which is the same in both cases:

    # loss_func = T.nn.NLLLoss()  # assumes log_softmax()
    # loss_func = T.nn.CrossEntropyLoss()  # no activation
    optimizer = T.optim.SGD(net.parameters(), lr=lrn_rate)

After training, if you want to make a prediction where the output values are pseudo-probabilities that sum to 1.0, there are again two choices. With NLLLoss(), the output values are log-probabilities, so you apply the exp() function to remove the log. With CrossEntropyLoss(), the output values are raw logits, which could be any values, so you apply softmax() to get values that sum to 1.
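The prediction step described above (exp() on log-probabilities, softmax() on raw logits) can be sketched as follows. This is a minimal illustration rather than the original demo code: the logits tensor is made-up data standing in for a trained network's raw output, and the variable names are assumptions.

```python
import torch as T

T.manual_seed(0)

# Hypothetical raw outputs (logits) for 2 items and 3 classes.
logits = T.randn(2, 3)

# NLLLoss() approach: the network ends in log_softmax(), so its
# outputs are log-probabilities; exp() removes the log.
log_probs = T.log_softmax(logits, dim=1)
probs_nll = T.exp(log_probs)

# CrossEntropyLoss() approach: the network emits raw logits, so
# softmax() is applied at prediction time instead.
probs_ce = T.softmax(logits, dim=1)

print(probs_nll)
print(probs_ce)
```

Both tensors should hold the same pseudo-probabilities, with each row summing to 1.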

Internally, the two approaches are identical, so you get the exact same results with either one.
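The reason the results match is plain arithmetic: cross entropy computed from raw logits is the negative log-likelihood of the log-softmax values. The sketch below shows this in plain Python for a single item. It illustrates the math only and is not PyTorch's actual implementation; the helper names and the sample values are made up.

```python
import math

def log_softmax(logits):
    # log(exp(x_i) / sum_j exp(x_j)), shifted by the max for stability
    m = max(logits)
    log_sum = m + math.log(sum(math.exp(x - m) for x in logits))
    return [x - log_sum for x in logits]

def nll_loss(log_probs, target):
    # negative log-likelihood of the correct class
    return -log_probs[target]

def cross_entropy(logits, target):
    # cross entropy computed directly from raw logits
    m = max(logits)
    log_sum = m + math.log(sum(math.exp(x - m) for x in logits))
    return log_sum - logits[target]

logits = [2.0, -1.0, 0.5]   # made-up raw outputs for one item
target = 0                  # made-up correct class index

loss_a = nll_loss(log_softmax(logits), target)
loss_b = cross_entropy(logits, target)
print(loss_a, loss_b)
```

The two printed values should agree, since both reduce to the log of the summed exponentials minus the logit of the correct class.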

If you create a multi-class neural network classifier with PyTorch, you can use the NLLLoss() loss function with log_softmax() output activation, or you can use CrossEntropyLoss() with identity (in other words, no) output activation.
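To see both options side by side, here is a minimal sketch of one classifier that can be built either way. The class name Net, the hid layer, the 4-8-3 layer sizes, the tanh() hidden activation, and the use_log_softmax flag are all illustrative assumptions; only the oupt layer name and the two output lines come from the snippets in this post.

```python
import torch as T

class Net(T.nn.Module):
    # Hypothetical 4-(8)-3 classifier; the layer sizes and the
    # use_log_softmax flag are illustrative assumptions.
    def __init__(self, use_log_softmax):
        super().__init__()
        self.hid = T.nn.Linear(4, 8)
        self.oupt = T.nn.Linear(8, 3)
        self.use_log_softmax = use_log_softmax

    def forward(self, x):
        z = T.tanh(self.hid(x))
        if self.use_log_softmax:
            return T.log_softmax(self.oupt(z), dim=1)  # for NLLLoss()
        return self.oupt(z)  # no activation for CrossEntropyLoss()

T.manual_seed(1)
net_nll = Net(use_log_softmax=True)
net_ce = Net(use_log_softmax=False)
net_ce.load_state_dict(net_nll.state_dict())  # same weights in both

x = T.randn(5, 4)                  # made-up batch of 5 items
y = T.tensor([0, 2, 1, 1, 0])      # made-up class labels

loss_nll = T.nn.NLLLoss()(net_nll(x), y)
loss_ce = T.nn.CrossEntropyLoss()(net_ce(x), y)
print(loss_nll.item(), loss_ce.item())
```

With identical weights and data, the two loss values should match up to floating-point rounding, which is the equivalence this post describes.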
